In the high-stakes world of mergers and acquisitions, the "multi-location" factor acts as a force multiplier. For CTOs and CIOs overseeing management services organizations (MSOs), dental service organizations (DSOs), veterinary groups, or national childcare chains, growth isn’t just about adding dots to a map; it’s about ensuring every single one of those dots functions as a high-performing unit from Day 1.

The reality of modern M&A is that the deal isn't done when the papers are signed; it’s done when the technology actually works at scale. Yet, in the rush to capture synergies and prove ROI to the board, IT integration is frequently relegated to the "cleanup" phase. This disconnect is where millions of dollars in deal value silently disappear.

At MellinTech, we’ve spent decades in the trenches of nationwide rollouts and site conversions. We’ve seen that the costliest mistakes aren’t usually the result of a single catastrophic server failure. Instead, they are the result of compounding, preventable oversights that multiply across 50, 200, or 500 locations.

Here is a deep dive into the seven M&A IT mistakes that cost millions and how to ensure your next integration protects the bottom line.

1. Treating IT Like a Post-Close Cleanup Project

The most common mistake in M&A is the "Wall of Silence" between the deal team and the IT team. By the time IT leadership is brought into the fold, the legal team is at the finish line, finance has already modeled the expected ROI, and operations has set aggressive transition deadlines.

When IT is brought in late, they aren't planning an integration; they are inheriting a crisis. This creates a dangerous gap between executive expectations and technical reality. Without IT involvement during the letter of intent (LOI) or early diligence phases, you are essentially buying a "black box" of technical debt.

The True Cost

Reactive Remediation: Instead of proactive upgrades, teams are forced into "break-fix" mode, paying premium rates for emergency labor.

Technical Debt: You inherit years of poor maintenance that should have been used as a lever during price negotiations.

Operational Stalling: Every week spent untangling inherited systems is a week that the "synergy" promised to the board is delayed.

The Fix: IT needs a seat at the table early enough to influence diligence and site prioritization. A "Technical Due Diligence" report should be just as influential as a financial audit.

2. Confusing a Basic Inventory with Real Discovery

Many acquirers believe they understand their new assets because they have a spreadsheet of vendors and software licenses. That is not discovery; that is an inventory list.

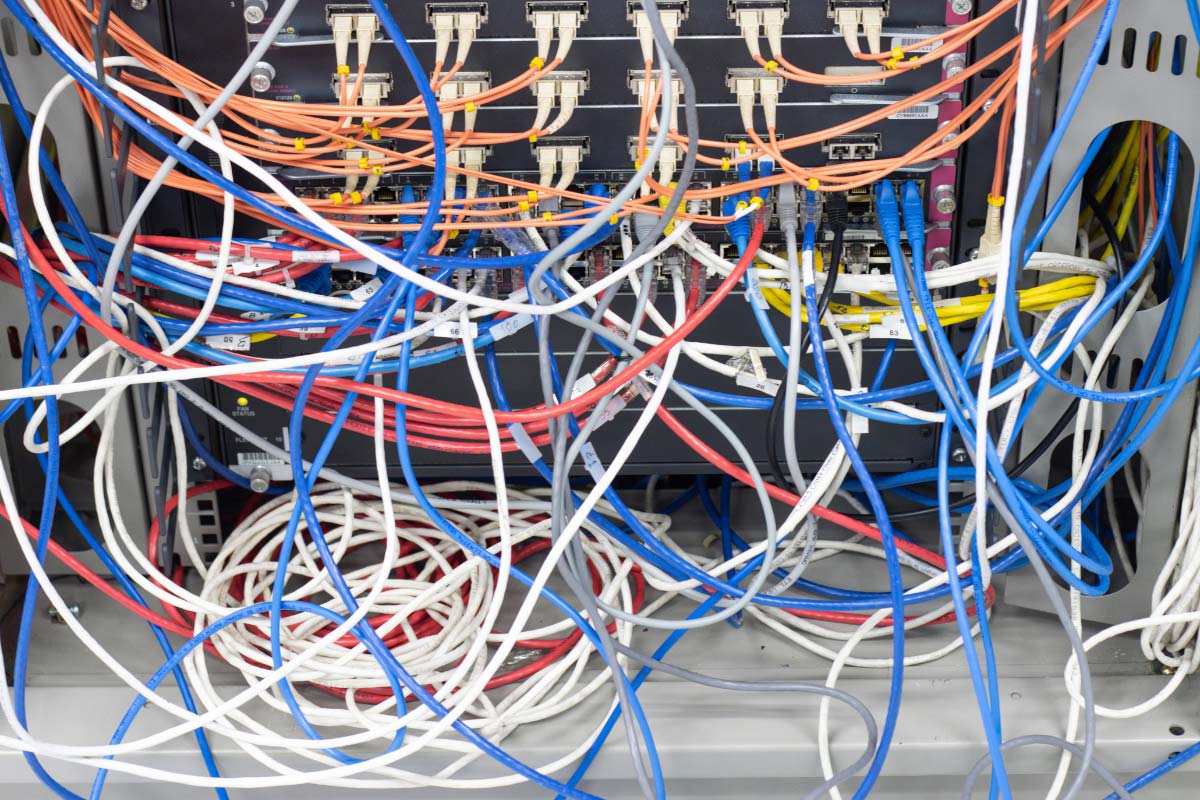

An inventory tells you what they have (e.g., "Site A uses X practice management software and Y firewall"). Real discovery tells you how it is performing. It reveals that Site A’s firewall is three versions out of date, the cabling is a "spaghetti mess" that violates fire code, and the ISP contract is tied to a local provider with no SLA.

The "Physical Layer" Blind Spot

In multi-location environments, the physical state of the facility is often the biggest variable. We frequently see organizations plan a rollout based on a spreadsheet, only for the technician to arrive on-site and find:

No available rack space in the server closet.

Insufficient power or cooling for new hardware.

Cabling that is poorly labeled or failing, making the "simple install" a two-day project.

The Fix: Go deeper than the software layer. True discovery must document the physical layer: rack conditions, cable category and condition (e.g., Cat5e vs. Cat6), Wi-Fi heat maps, and "shadow IT" workarounds created by local staff to bypass broken systems.

Is your next acquisition hiding technical debt? Don’t rely on a spreadsheet to protect your deal value. MellinTech provides professional Site Discovery and Audits that uncover the physical realities before they become expensive problems.

3. Building Integration Plans from HQ Instead of the Field

High-level integration plans look flawless in a boardroom. In a conference room, it’s easy to say, "We will convert 15 sites per week." In the field, however, every location has a unique story.

Multi-location organizations are notorious for site-level variation. A clinic in a legacy building from the 1950s has vastly different infrastructure needs than a DeNovo site in a modern retail strip. When plans ignore these differences, the business pays through:

Repeat Site Visits: "Truck rolls" are the silent killer of IT budgets. If a tech has to go back because the site wasn't ready, you’ve doubled your labor cost.

Delayed Cutovers: Every failed cutover is a day of lost production and frustrated providers.

Emergency Procurement: Buying hardware at retail prices locally because the "standard" kit didn't fit the site's unique constraints.

The Fix: Develop "Site-Readiness Standards." No site enters the conversion queue until a field-verified readiness report confirms the foundation can support the new standard.
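As a rough illustration, a readiness gate like this can be expressed as a simple check over a field report. The sketch below is hypothetical: the `ReadinessReport` fields and function names are our own assumptions, loosely based on the physical-layer items mentioned earlier (rack space, power and cooling, cabling), and a real standard would cover more.

```python
from dataclasses import dataclass


@dataclass
class ReadinessReport:
    """Hypothetical field report for one site; field names are illustrative."""
    site_id: str
    field_verified: bool              # a technician confirmed the items on-site
    rack_space_available: bool
    power_and_cooling_ok: bool
    cabling_labeled_and_tested: bool


def blocking_issues(report: ReadinessReport) -> list[str]:
    """Return the reasons a site should stay out of the conversion queue."""
    issues = []
    if not report.field_verified:
        issues.append("report not field-verified")
    if not report.rack_space_available:
        issues.append("no available rack space")
    if not report.power_and_cooling_ok:
        issues.append("insufficient power or cooling")
    if not report.cabling_labeled_and_tested:
        issues.append("cabling unlabeled or failing")
    return issues


def can_enter_queue(report: ReadinessReport) -> bool:
    """A site enters the queue only when nothing is blocking it."""
    return not blocking_issues(report)
```

The point of returning the list of issues rather than a bare yes/no is that the same report can drive the remediation work order for a site that fails the gate.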

4. Prioritizing Applications While Neglecting Infrastructure

It’s easy to get excited about a new EMR, ERP, or cloud-based practice management system. These are the tools the C-suite sees and uses. However, infrastructure—the "invisible" layer of cabling, switches, and circuits—is the highway those tools travel on.

If the network is unstable or the cabling is failing, your expensive new software will underperform. We often see organizations spend millions on a software migration only to have users complain that "the system is slow." In our experience, roughly 90% of the time the software isn't the problem; the infrastructure is.

The Foundation Checklist:

| Component | Risk of Neglect |
|---|---|
| Structured Cabling | Packet loss, slow speeds, and hardware connectivity failures. |
| PoE Capacity | New VoIP phones or security cameras won't power on. |
| Wi-Fi Design | Dead zones in clinical areas leading to data entry delays. |
| Telecom/Circuits | Inadequate bandwidth for cloud-heavy applications. |

The Fix: Allocate a dedicated percentage of the M&A budget specifically for infrastructure remediation. It’s not an "extra"; it’s the prerequisite for a functional Day 1.

5. Overlooking the "Vendor & Contract Gap"

In the chaos of an acquisition, ownership of hardware and services often falls through the cracks. Who owns the warranty on the servers at the new 50 locations? Is the firewall license expiring next Tuesday?

When these questions are left unanswered, the organization inherits massive operational risk. A failed device at a critical site might no longer be under support, turning a 2-hour fix into a 3-day outage while you wait for new hardware procurement.

Risks to Identify Early:

License Lapses: Sudden loss of security patches or software access.

Contractual Overlap: Paying for two different ISPs or VoIP providers because the legacy contract wasn't officially terminated.

Carrier Lock-in: Inheriting "predatory" local contracts with long-term commitments and no exit clauses.

The Fix: Conduct a structured audit of all carrier agreements, hardware support status, and licensing ownership before the transition begins. Assign a dedicated project manager to handle vendor cutovers and cancellations.
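Two of the risks above, license lapses and contractual overlap, lend themselves to a mechanical check once the contract data is in one place. The following is a minimal sketch under assumed names: the `Contract` record, the service labels, and the 60-day window are illustrative choices, not a prescribed audit format.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Contract:
    """Hypothetical record for one carrier, support, or license agreement."""
    site_id: str
    service: str      # e.g. "isp", "voip", "firewall-license"
    vendor: str
    end_date: date


def expiring_soon(contracts, today, window_days=60):
    """Contracts that have already lapsed or will end within the window."""
    cutoff = today + timedelta(days=window_days)
    return [c for c in contracts if c.end_date <= cutoff]


def overlapping_services(contracts, today):
    """Sites with more than one active vendor for the same service."""
    active = defaultdict(set)
    for c in contracts:
        if c.end_date >= today:
            active[(c.site_id, c.service)].add(c.vendor)
    return {key: sorted(vendors) for key, vendors in active.items()
            if len(vendors) > 1}
```

Running both checks across every acquired site before the transition begins gives the project manager a concrete list of renewals to chase and duplicate contracts to cancel.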

Stop forcing your internal IT team to choose between maintenance and growth. We fill the gap, acting as your boots on the ground so your leadership can stay focused on the big picture.

6. The "One-Size-Fits-All" Timeline Trap

Private equity backers and boards often demand speed. They want the integration done yesterday. This pressure frequently leads to a "flat" timeline where every site is scheduled for conversion in a linear, rigid fashion.

The problem? Not every site is ready. Treating a site with major infrastructure debt the same as a "clean" site is a recipe for disaster. If you force a site into a timeline it can't support, you will experience a "rolling fire drill" where every week is spent putting out fires from the previous week's botched rollout.

A Smarter Approach: Phased Execution

Tier 1 (Green): Sites that are "standard-ready" and can be converted immediately.

Tier 2 (Yellow): Sites requiring minor remediation (e.g., adding an AP or rack cleanup).

Tier 3 (Red): High-risk sites requiring full infrastructure overhauls before software is touched.

The Fix: Sequence your rollout based on Technical Readiness, not just geographic proximity or acquisition date.
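To make the tiering concrete, here is a small sketch of how sites might be classified and sequenced. The `Site` fields and the classification rules are simplifying assumptions for illustration; in practice the inputs would come from a field-verified discovery report.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    GREEN = 1    # standard-ready, convert immediately
    YELLOW = 2   # minor remediation first
    RED = 3      # full infrastructure overhaul before software is touched


@dataclass
class Site:
    """Hypothetical per-site summary; fields are illustrative."""
    site_id: str
    minor_items: int           # e.g. add an AP, rack cleanup
    needs_full_overhaul: bool


def classify(site: Site) -> Tier:
    """Assign a readiness tier from the site's remediation needs."""
    if site.needs_full_overhaul:
        return Tier.RED
    if site.minor_items > 0:
        return Tier.YELLOW
    return Tier.GREEN


def rollout_order(sites):
    """Sequence sites by readiness tier, then by least remediation work."""
    return sorted(sites, key=lambda s: (classify(s), s.minor_items))
```

Sorting by tier first means Green sites start delivering value while remediation at Yellow and Red sites proceeds in parallel, rather than the whole schedule stalling on the hardest location.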

7. Calling the Project "Done" at Cutover

Many organizations celebrate when the new software is "live" at all locations. But a successful integration is measured by stability, not just activity.

A site can be "live" but have dozens of unresolved exceptions. Documentation might be missing, and the local staff might be relying on "temporary" workarounds that eventually become permanent, insecure habits. This is how "Day 100" problems are born: issues that linger long after the integration team has moved on to the next deal.

The "Stability" Checklist:

Closeout Documentation: Does your IT team have a "Source of Truth" for every site (rack photos, port maps, circuit IDs)?

Exception Closure: Have all the "we'll fix this later" items actually been addressed?

User Proficiency: Are the staff using the environment as intended, or have they reverted to old, inefficient processes?

The Fix: Define success as "Site Stability." Don't close the project until the site has operated for 30 days without high-priority tickets and the documentation is 100% complete and handed off to the internal support team.
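One way to keep this definition honest is to encode it as an explicit closeout check. The sketch below is an assumption-laden example: it reads "30 days without high-priority tickets" as 30 consecutive clean days counted from cutover or from the most recent high-priority ticket, whichever is later, and the `SiteCloseout` fields are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SiteCloseout:
    """Hypothetical closeout record for one site; fields are illustrative."""
    site_id: str
    cutover_date: date
    last_high_priority_ticket: Optional[date]  # None if none since cutover
    open_exceptions: int                       # "we'll fix this later" items
    docs_complete: bool                        # rack photos, port maps, circuit IDs


def clean_days(site: SiteCloseout, today: date) -> int:
    """Days since cutover or the last high-priority ticket, whichever is later."""
    start = site.cutover_date
    ticket = site.last_high_priority_ticket
    if ticket is not None and ticket > start:
        start = ticket
    return (today - start).days


def ready_to_close(site: SiteCloseout, today: date, required_days: int = 30) -> bool:
    """Close only when the site is stable, exception-free, and documented."""
    return (clean_days(site, today) >= required_days
            and site.open_exceptions == 0
            and site.docs_complete)
```

A new high-priority ticket resets the clock under this reading, which is stricter than counting total clean days but matches the intent of proving the site is stable before handoff.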

Why "Flex Capacity" is the Solution for High-Growth Groups

For multi-location organizations, the "flex capacity" needed to execute these integrations is often beyond the scope of a standard internal IT team. Your internal team is likely optimized for maintenance, not for the burst capacity required to convert 50 sites in 90 days.

This is where a specialized partner like MellinTech changes the game. We act as an extension of your IT leadership, filling the gaps in your team’s capacity and specialized field knowledge.

The MellinTech Difference:

Technology Consultation & System Design: We help bridge the gap between executive strategy and field-level execution.

Structured Cabling: We ensure the physical foundation is robust enough for your software to perform.

Nationwide Rollouts & Site Conversions: We handle the complex logistics of multi-site integrations so your team can focus on core business initiatives.

M&A Support & DeNovo Builds: Whether you are acquiring assets or building new ones, we provide the consistent standard required for scale.

The most expensive M&A IT mistakes are rarely the most dramatic ones. They are the preventable, structural failures that multiply across locations and timelines. For multi-location groups, that multiplication is where the real value is either protected or lost.

The goal is not just to "finish" an integration. It is to build a reliable, scalable environment that allows your organization to grow without technical friction. When you get the infrastructure right, IT stops being a post-close drag and becomes what it should be: a driver of long-term integration success.

Is your infrastructure ready for your next acquisition? Don't wait for a botched rollout to find out. Let’s talk about building a scalable, predictable IT roadmap for your multi-location organization.